End-to-end LLM-driven scientific workflows

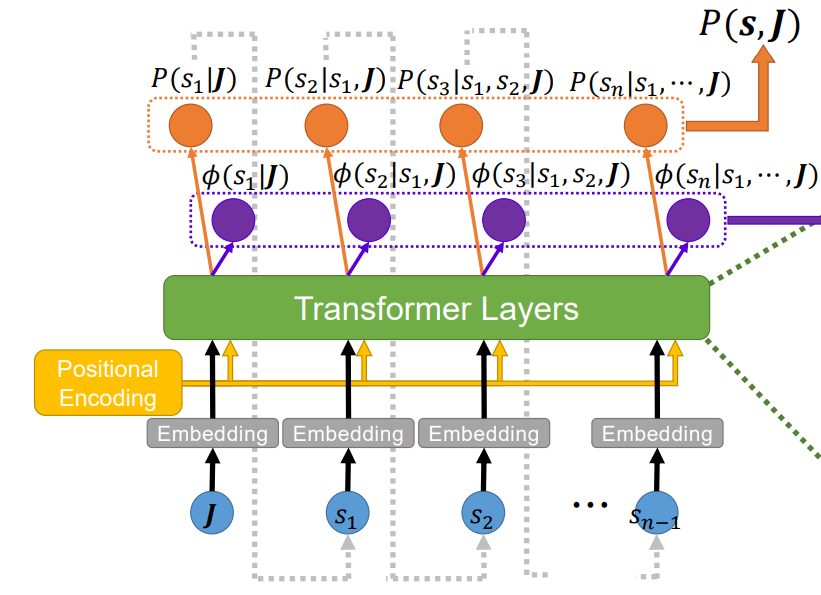

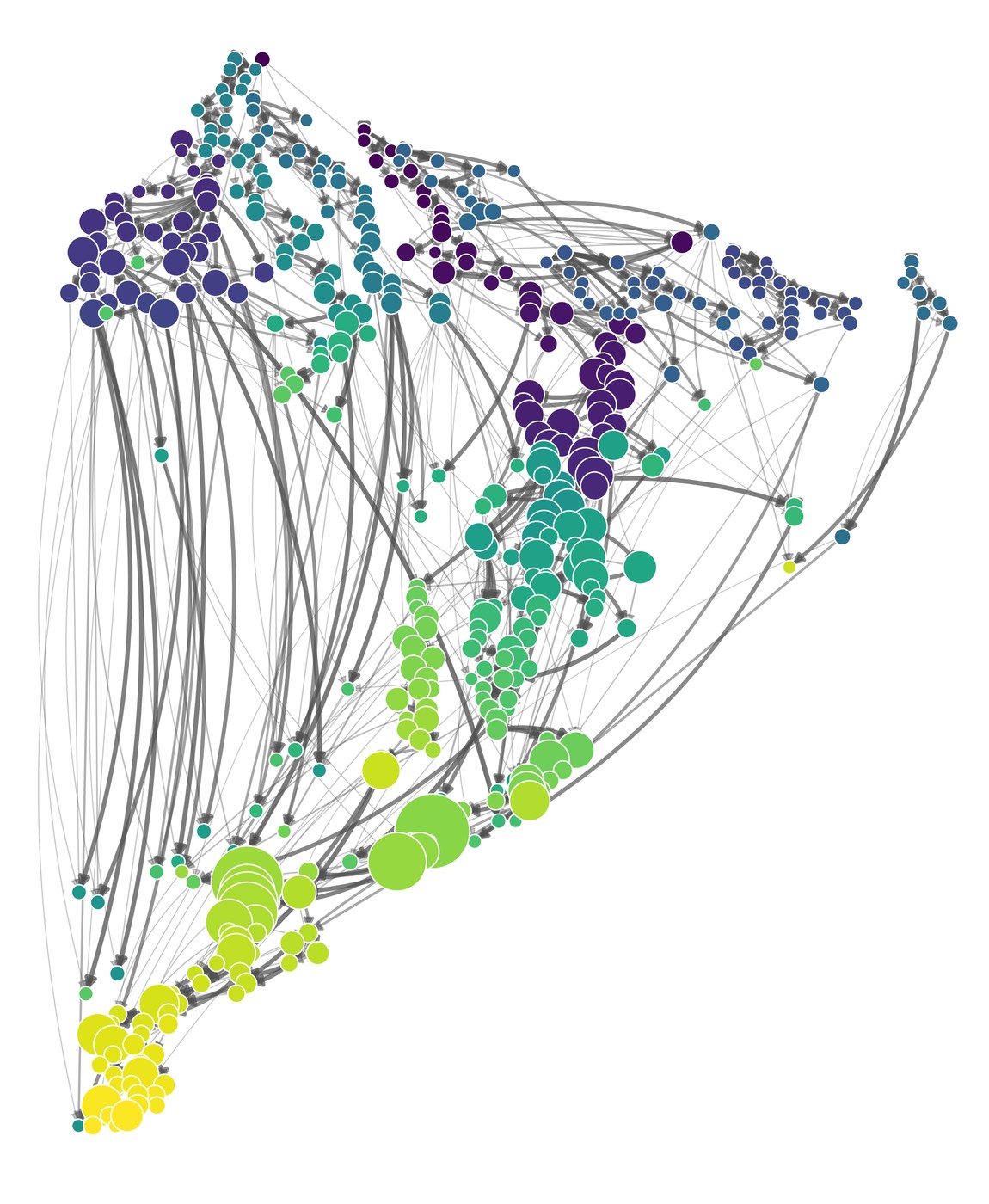

The newest pillar and, I think, the highest-leverage one. The starting question: what if we treat scientific discovery itself as an optimization problem over strategies, with the LLM as the proposal distribution and experimental feedback playing the role of the gradient signal? The first installment, *Scientific discovery as meta-optimization*, tests this framing on combinatorial optimization, a domain where the loop is closed, the metrics are real, and the agent has nowhere to hide.

From there, the program scales up: agents that frame problems, agents that design experiments, agents that draft and review papers. Crucially, everything is built so that failure is preserved. A discarded hypothesis, in this framework, is not noise — it is evidence. Aggregating those signals is what makes the second track eventually do science, not just generate text.
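To make the framing concrete, here is a minimal sketch of such a loop, under heavy assumptions. It is not the method from the first installment. The toy Max-Cut instance, the strategy parameters (`steps`, `accept_worse`), and every function name are hypothetical. `propose_strategy` is a random-perturbation stand-in for what would be an LLM call conditioned on the archive. The point it illustrates is structural: an outer loop searches over strategies, an inner loop scores each one on a real metric, and rejected proposals stay in the archive instead of being thrown away.

```python
import random
from dataclasses import dataclass

# Hypothetical toy instance: Max-Cut on a small fixed graph with 6 nodes.
EDGES = [(0, 1), (0, 2), (1, 2), (1, 3), (2, 4), (3, 4), (3, 5), (4, 5)]
N = 6


def cut_value(assignment):
    """Number of edges whose endpoints fall on different sides of the cut."""
    return sum(1 for u, v in EDGES if assignment[u] != assignment[v])


@dataclass
class Record:
    strategy: dict   # the proposed search strategy
    score: int       # best cut value the strategy reached
    accepted: bool   # False means a discarded hypothesis, kept as evidence


def run_strategy(strategy, rng):
    """Inner loop: single-flip local search configured by the strategy."""
    x = [rng.randint(0, 1) for _ in range(N)]
    current = best = cut_value(x)
    for _ in range(strategy["steps"]):
        i = rng.randrange(N)
        x[i] ^= 1  # flip one node to the other side
        candidate = cut_value(x)
        if candidate >= current or rng.random() < strategy["accept_worse"]:
            current = candidate
            best = max(best, current)
        else:
            x[i] ^= 1  # revert the flip
    return best


def propose_strategy(incumbent, archive, rng):
    """Stand-in for the LLM proposal step (assumption: a real system would
    prompt a model with the archive, failures included). Here the archive is
    ignored and the incumbent is perturbed at random."""
    return {
        "steps": max(1, incumbent["steps"] + rng.choice([-10, 10, 20])),
        "accept_worse": min(1.0, max(0.0, incumbent["accept_worse"]
                                     + rng.uniform(-0.1, 0.1))),
    }


def meta_optimize(rounds=20, seed=0):
    """Outer loop: search over strategies, recording every outcome."""
    rng = random.Random(seed)
    incumbent = {"steps": 20, "accept_worse": 0.3}
    incumbent_score = run_strategy(incumbent, rng)
    archive = [Record(incumbent, incumbent_score, True)]
    for _ in range(rounds):
        proposal = propose_strategy(incumbent, archive, rng)
        score = run_strategy(proposal, rng)
        accepted = score >= incumbent_score
        archive.append(Record(proposal, score, accepted))  # failures are kept
        if accepted:
            incumbent, incumbent_score = proposal, score
    return incumbent, incumbent_score, archive


if __name__ == "__main__":
    best, score, archive = meta_optimize()
    rejected = sum(1 for r in archive if not r.accepted)
    print(f"best strategy: {best}, score: {score}, rejected kept: {rejected}")
```

The archive is append-only on purpose: a proposer that can read both accepted and rejected records has strictly more signal than one that only sees the current best.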

Long-term, I am after an LLM-native publication venue: a place where the artifacts are not PDFs but structured proposals, reproducible runs, and machine-readable critiques; where agents review each other; and where humans walk in for the parts they enjoy and the decisions that need taste.

- Zhang, Sipling & Di Ventra (2026) — Scientific discovery as meta-optimization: a combinatorial optimization case study